Deep Learning

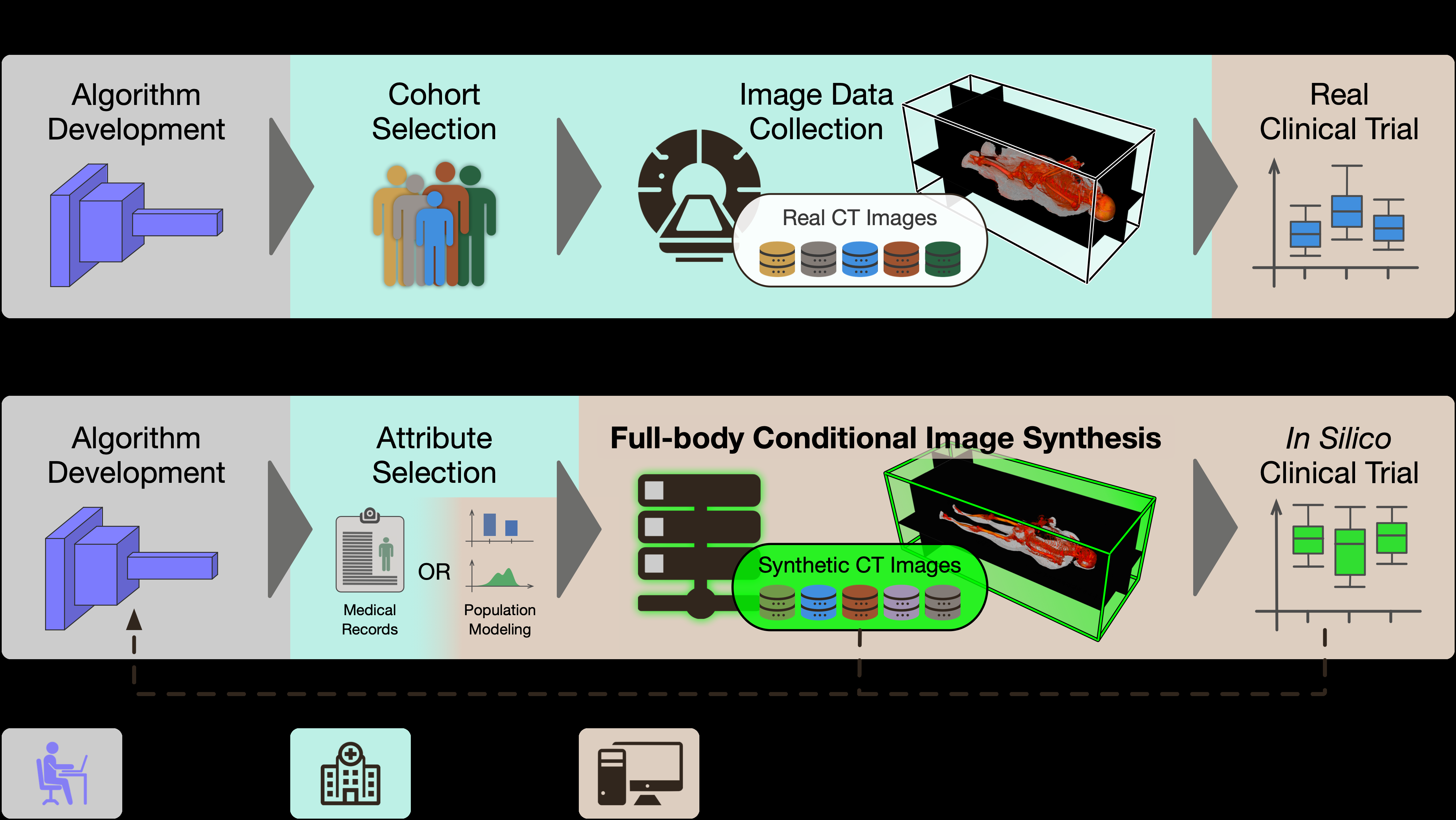

Towards Virtual Clinical Trials of Radiology AI with Conditional Generative Modeling

We propose a framework for conducting virtual clinical trials of radiology AI systems using conditional generative models to synthesize realistic medical imaging scenarios for comprehensive AI evaluation.

B. D. Killeen*, Bohua Wan*, A. V. Kulkarni, N. Drenkow, M. Oberst, P. H. Yi, M. Unberath

arXiv preprint arXiv:2502.09688 (2025).

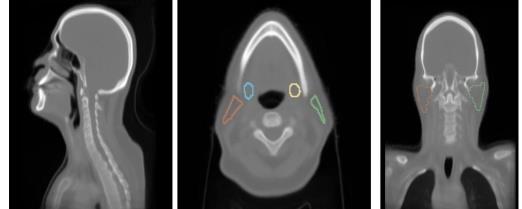

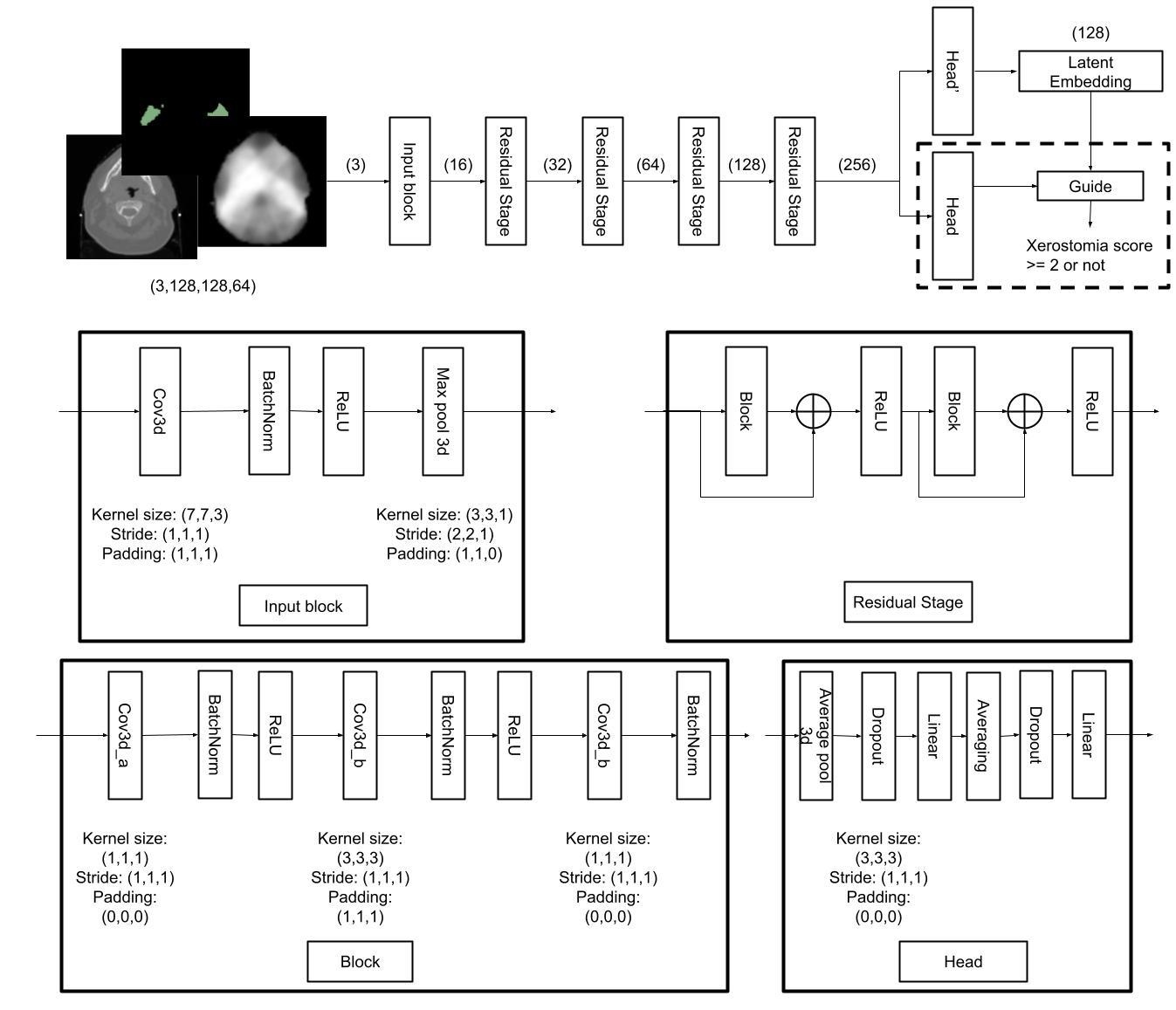

Deep learning xerostomia prediction model with anatomy normalization and high-resolution class activation map

We develop an interpretable deep learning model for xerostomia prediction using anatomy normalization and high-resolution class activation maps for improved spatial interpretability.

Bohua Wan, T. McNutt, H. Quon, J. Lee

Proc. SPIE Medical Imaging 2025 (2025).

Deep learning prediction of radiation-induced xerostomia with supervised contrastive pre-training and cluster-guided loss

We propose a novel deep learning framework for predicting radiation-induced xerostomia using supervised contrastive pre-training and cluster-guided loss.

Bohua Wan, T. McNutt, R. Ger, H. Quon, J. Lee

Proc. SPIE Medical Imaging 2024 (2024).

Spatial-temporal attention for video-based assessment of intraoperative surgical skill

We propose a spatial-temporal attention mechanism for automated surgical skill assessment from intraoperative videos, enabling objective evaluation of surgical performance.

Bohua Wan, M. Peven, G. Hager, S. Sikder, S. S. Vedula

Scientific Reports (2024).

Combining ADDA with Deep CORAL: Unsupervised Domain Adaptation for Image Classification

We combine Adversarial Discriminative Domain Adaptation (ADDA) with Deep CORAL so that ADDA makes better use of its pretrained initialization. Vanilla ADDA diverges drastically from the initialization, giving much poorer results in early epochs than the initialization itself, and it requires careful fine-tuning to reach satisfactory results. With our modifications, ADDA-CORAL trains much faster and yields better results.

Bohua Wan, Cong Mu, Ruzhang Zhao, Zhuoying Li (ordered alphabetically)
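As a rough illustration of the Deep CORAL ingredient in ADDA-CORAL, the sketch below computes the standard CORAL loss, the squared Frobenius distance between source and target feature covariances scaled by 1/(4d²). How the project actually couples this term with ADDA is not stated here. The `combined_target_loss` helper and its weight `lam` are hypothetical, shown only as one plausible way to regularize the adversarial objective.

```python
def covariance(features):
    """Unbiased (n - 1) covariance matrix of a list of n feature vectors."""
    n = len(features)
    d = len(features[0])
    means = [sum(row[j] for row in features) / n for j in range(d)]
    centered = [[row[j] - means[j] for j in range(d)] for row in features]
    return [
        [sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(d)]
        for i in range(d)
    ]


def coral_loss(source, target):
    """CORAL loss: ||C_s - C_t||_F^2 / (4 d^2) for d-dimensional features."""
    d = len(source[0])
    cs = covariance(source)
    ct = covariance(target)
    sq_frobenius = sum(
        (cs[i][j] - ct[i][j]) ** 2 for i in range(d) for j in range(d)
    )
    return sq_frobenius / (4 * d * d)


def combined_target_loss(adversarial_loss, source, target, lam=1.0):
    """Hypothetical combination: adversarial loss plus a weighted CORAL term.

    This is an assumption for illustration, not the project's confirmed
    objective.
    """
    return adversarial_loss + lam * coral_loss(source, target)


if __name__ == "__main__":
    src = [[0.0, 0.0], [1.0, 1.0], [2.0, 2.0]]
    tgt = [[0.0, 0.0], [2.0, 2.0], [4.0, 4.0]]
    print(coral_loss(src, tgt))                      # 2.25
    print(combined_target_loss(0.5, src, tgt, 2.0))  # 5.0
```

The CORAL term is zero when the two feature sets share the same covariance, so it penalizes a target encoder whose feature statistics drift away from those of the source encoder. In practice the same computation would be done on mini-batch tensors in a deep learning framework rather than on Python lists.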

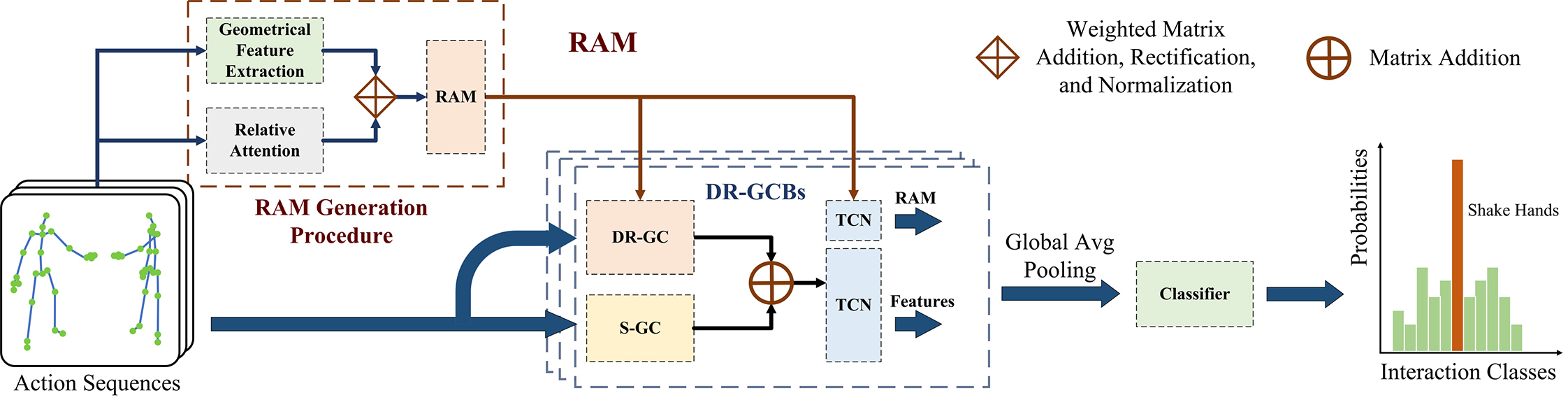

Dyadic Relational Graph Convolutional Networks for Skeleton-based Human Interaction Recognition

We apply Graph Convolutional Networks to skeleton-based human-human interaction recognition. We design a Relational Adjacency Matrix (RAM) to represent dynamic relational graphs between the two actors' skeletons.

Liping Zhu*, Bohua Wan*, Chengyang Li, Gangyi Tian, Yi Hou, Kun Yuan

Pattern Recognition 115 (2021): 107920.